SOFTWARE ENGINEERING IS NOT DEAD – AI Is Just Rewriting the Rules

AI is not replacing engineers. It is compressing the number of engineers needed to ship the same outcome. That distinction matters enormously for careers, for companies, and for the next decade of software.

The Compression, Not Elimination, of Engineering

The spreadsheet did not kill accounting. It changed how many accountants a company needed to manage the same workload. Software engineering is undergoing the same structural shift — accelerated by AI tools that genuinely multiply individual output.

AI shifts the bottleneck from typing to thinking, and from implementation to verification. Code production gets cheaper; reliable, maintainable, secure software does not.

McKinsey’s 2025 Technology Trends Outlook documents 20–45% productivity gains in engineering teams using AI tools. A feature that once needed a team of five now gets shipped by a team of two or three. Demand for software is accelerating, not contracting. What is contracting is the ratio of engineers to output.
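
As a back-of-envelope check on what those figures imply, the sketch below assumes that a productivity gain applies uniformly to every engineer and that output scales linearly with headcount. Both are simplifications, and the function names are illustrative rather than anything taken from the McKinsey report.

```python
def engineers_needed(baseline_team: int, productivity_gain: float) -> float:
    """Headcount needed to match the baseline team's output.

    Assumes output scales linearly with headcount and that the gain
    applies equally to every engineer (a simplification).
    """
    return baseline_team / (1.0 + productivity_gain)


def gain_required(baseline_team: int, target_team: int) -> float:
    """Productivity gain needed for target_team to match baseline_team."""
    return baseline_team / target_team - 1.0


if __name__ == "__main__":
    for gain in (0.20, 0.45):
        print(f"{gain:.0%} gain: {engineers_needed(5, gain):.1f} engineers")
    for target in (3, 2):
        print(f"team of {target}: needs {gain_required(5, target):.0%} gain")
```

Under these assumptions, the quoted range alone takes a team of five to roughly 3.4 to 4.2 engineers. A team of two or three would need gains of about 67% to 150%, so that figure may be best read as describing favourable, well-scoped work rather than the average case.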

Key shift: AI increases the premium on engineering discipline (specification clarity, fast verification, and architectural judgment), not just raw coding speed.

Tech Layoffs: AI Is Partly a Scapegoat

Over 127,000 U.S. tech workers were laid off in 2025. CEOs cited AI. The real story is messier, and engineering leaders need to say so honestly and clearly.

“Instead of saying ‘we miscalculated two or three years ago,’ companies now have a convenient scapegoat — AI.” — Prof. Florian Stephany, Oxford Internet Institute (CNBC, Oct 2025)

The Three Real Drivers

- COVID overhiring correction: Block grew from 3,800 to 10,000+ employees between 2019 and 2025. Salesforce, Meta, and Google all expanded aggressively during the pandemic. IBM’s CEO called the subsequent cuts ‘a natural correction.’ That bubble had to deflate, AI or not.

- Investor pressure on margins: Profitable companies are restructuring to signal efficiency to Wall Street. These layoffs signal seriousness to investors, not AI-driven necessity.

- Genuine AI efficiency gains — but narrower than claimed: Salesforce cut 4,000 support roles via AI agents. But Klarna, which credited AI for shrinking its workforce 40%, later had to rehire because the AI couldn’t handle real complexity. Harvard Business Review survey research also finds some ‘AI-driven’ layoffs are driven by anticipation of future capability — not current performance.

The most dangerous pattern: companies removing senior engineers before making their codebases AI-navigable, then wondering why AI tools underperform. Verification debt (more bugs, more rework) and security exposure follow. AI does not replace engineering culture; it needs one to work.

How Software Development Has Evolved

Every major methodology shift was a direct response to pain. The through-line across all eras: feedback loops got shorter, and intent became more explicit and machine-verifiable.

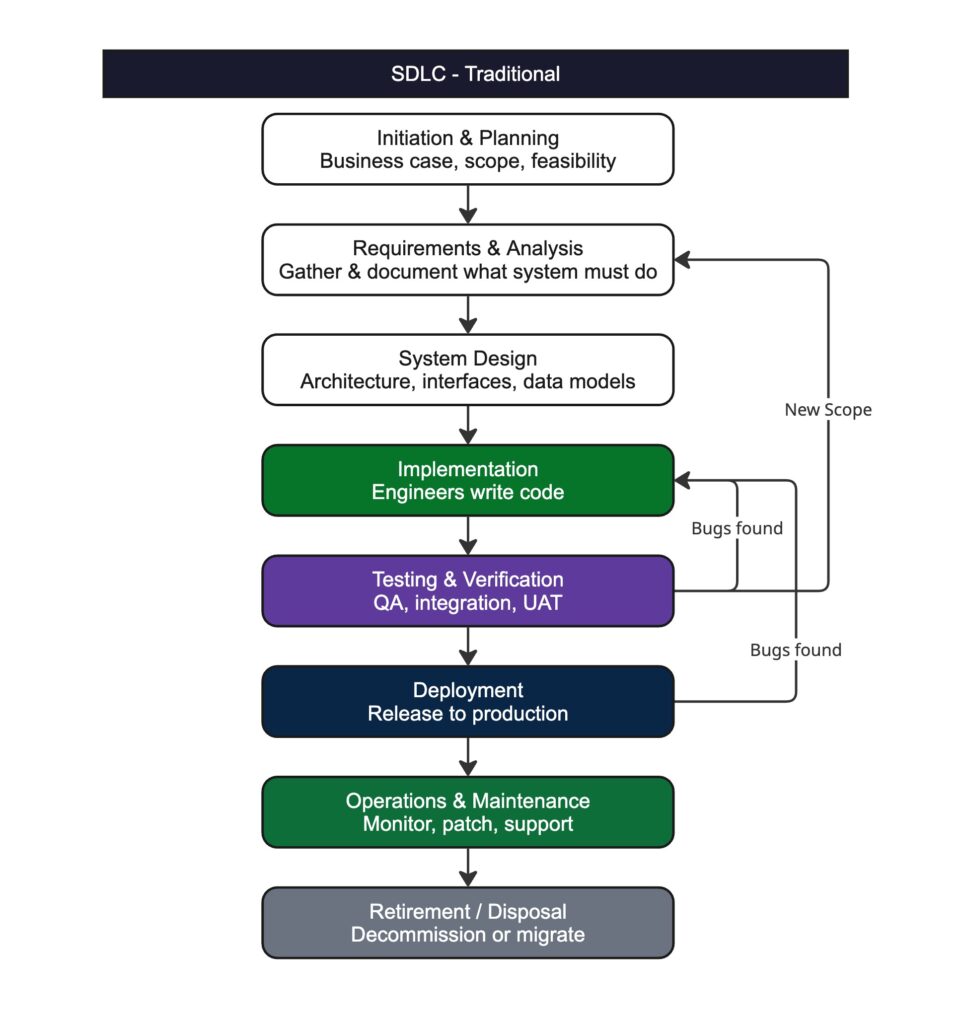

Era 1 · Traditional SDLC (1970s – 2000s)

What it is: Sequential, phase-gated development. Requirements → Design → Implementation → Testing → Deployment → Maintenance → Retirement. Each phase must formally close before the next opens. Critically, SDLC covers the full system lifecycle — even when AI compresses the coding phase, all other phases still require human engineering judgment.

Core loop: Months-long feedback cycles. A requirement error discovered in testing rewinds everything upstream.

Best for: Predictable, stable, compliance-heavy systems — government, payroll, billing, defence.

Limitation: Rigid. Months between idea and validated feedback. Poor fit for iterative modern software.

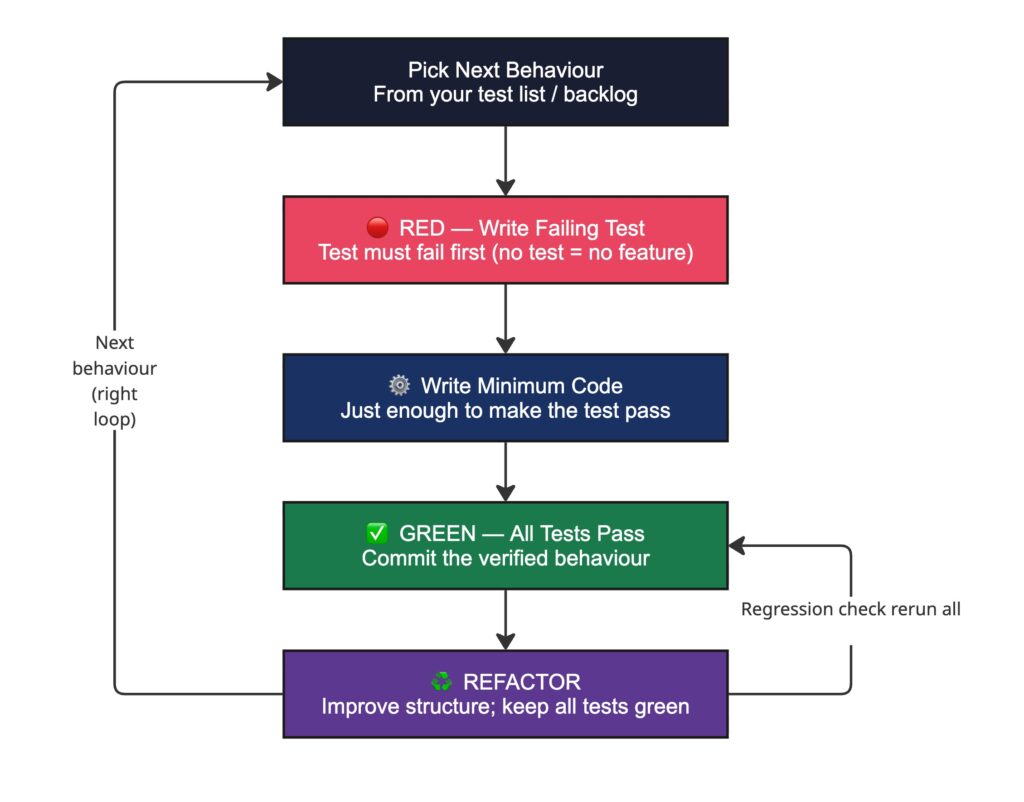

Era 2 · Test-Driven Development (2000s – 2020s)

What it is: Write the failing test first. Write the minimum code to make it pass. Refactor. The test is the specification — an executable contract. Verification is pulled to the front of implementation.

Core loop: Red → Green → Refactor. Hours-long cycles. Every change is instantly verified against intent.

Best for: Any team needing confident refactoring, regression safety, and living documentation of behaviour.

Why it matters in the AI era: TDD’s discipline — verification-first, machine-checkable specs — is the exact foundation that makes AI agents effective. Teams with strong TDD culture are significantly better positioned for agentic workflows.
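
A minimal sketch of one Red → Green → Refactor cycle, written in pytest style. The `slugify` function and its behaviour are hypothetical, chosen only to illustrate the loop: the tests are written first and fail, then the smallest implementation that satisfies them is added.

```python
import re


# Step 1 (red): these tests are written first and fail until slugify exists.
def test_lowercases_and_joins_words():
    assert slugify("Hello World") == "hello-world"


def test_drops_punctuation():
    assert slugify("AI: Rewriting the Rules!") == "ai-rewriting-the-rules"


def test_empty_title_gives_empty_slug():
    assert slugify("   ") == ""


# Step 2 (green): the minimum implementation that makes the tests pass.
# Step 3 (refactor): later clean-ups are safe because the tests stay in place.
def slugify(title: str) -> str:
    """Turn a title into a URL slug: lowercase words joined by hyphens."""
    words = re.findall(r"[a-z0-9]+", title.lower())
    return "-".join(words)
```

The tests double as the specification: anyone, human or agent, who changes `slugify` gets an immediate machine-checked answer about whether the intended behaviour still holds.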

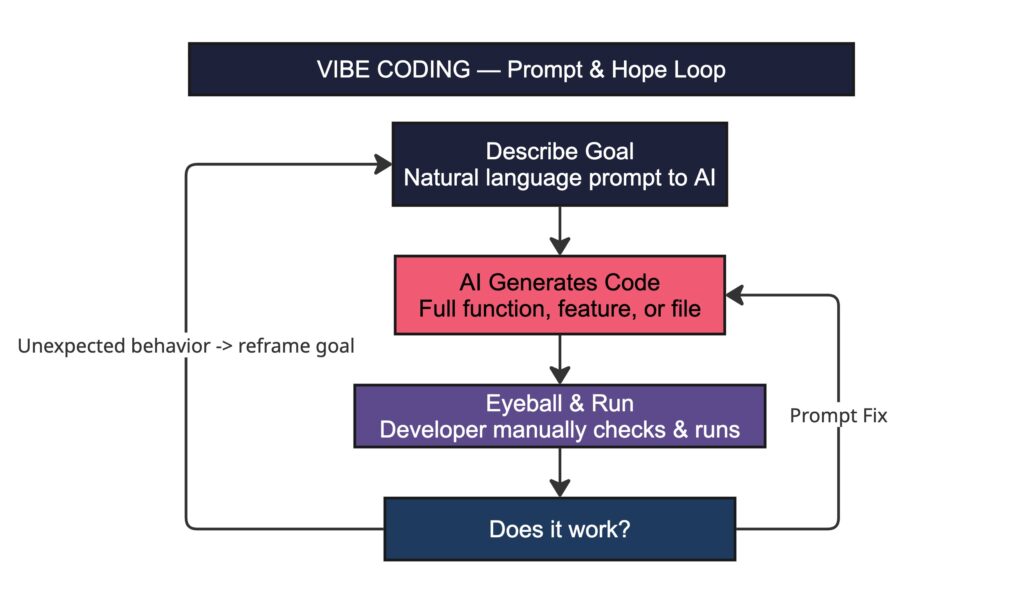

Era 3 · Vibe Coding (2023 – 2025)

What it is: Prompt AI with a goal in natural language. Accept the generated code. Iterate with more prompts. The term was coined by Andrej Karpathy in February 2025. The core idea: delegate implementation to the model and steer via quick feedback.

Core loop: Prompt → Generate → Eyeball → Prompt again. No formal spec. No structured verification. Fast but unverified.

Best for: Prototypes, solo demos, throwaway scripts, and early exploration where long-term maintenance is irrelevant.

Where it collapses: Architecture becomes an afterthought after ~2 weeks. Research shows AI-assisted vibe coding increases code complexity by ~41% and static analysis warnings by 30%. No spec means no shared source of truth for the agent, the developer, or the reviewer.

Era 4 · Spec-Driven Development (2025 – Present)

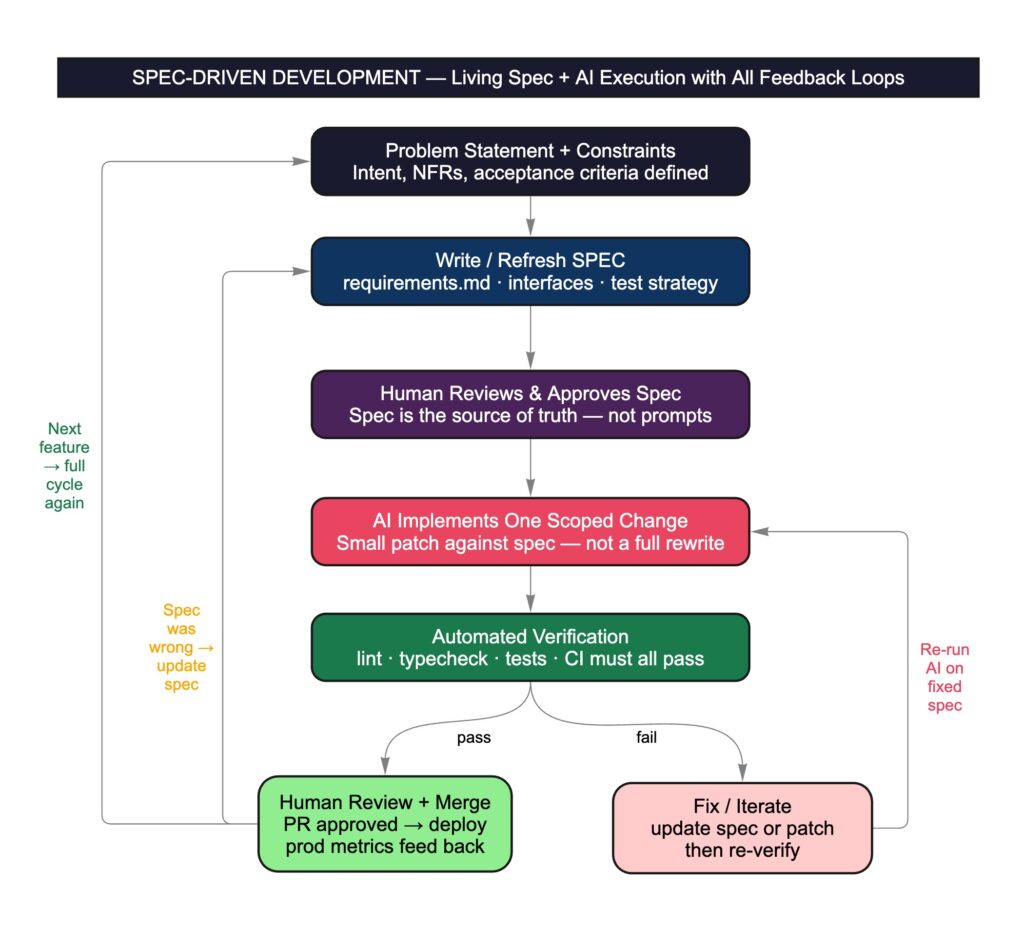

What it is: Write a living, machine-readable specification first (requirements, acceptance criteria, interfaces, test strategy). An AI agent executes against the spec in small, scoped steps. The human governs intent, not lines of code. The term took hold across GitHub Spec Kit, Amazon Kiro, and Thoughtworks by mid-2025.

Core loop: Intent → Spec (human-reviewed) → AI implements one scoped change → Automated verification (lint + tests + CI) → Fix or human review & merge → Spec updated on intent change.

Three levels of SDD (Thoughtworks):

- Spec-first (write before coding)

- Spec-anchored (evolve spec over time)

- Spec-as-source (spec is the primary artefact; code is derived)

Best for: Production systems, enterprise teams, any codebase that will be maintained and evolved over years.

Why it works: Specs version alongside code. Context loss between sessions is resolved by the spec. Human oversight is preserved at decision points, not at every line. The spec tames ‘prompt entropy’ at scale.
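
To make the spec-then-verify loop concrete, here is a minimal sketch in which acceptance criteria are executable checks and a candidate implementation is only accepted when all of them pass. The `Spec` and `Criterion` classes and the `apply_discount` example are hypothetical. This is not the format used by GitHub Spec Kit, Amazon Kiro, or any other tool named above.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass(frozen=True)
class Criterion:
    """One acceptance criterion: a description plus an executable check."""
    description: str
    check: Callable[..., bool]


@dataclass
class Spec:
    """Hypothetical machine-readable spec for a single scoped change."""
    intent: str
    interface: str
    criteria: list[Criterion] = field(default_factory=list)

    def verify(self, implementation: Callable) -> list[str]:
        """Return descriptions of failed criteria (empty list means pass)."""
        failures = []
        for criterion in self.criteria:
            try:
                ok = criterion.check(implementation)
            except Exception:
                ok = False  # a crashing check counts as a failure
            if not ok:
                failures.append(criterion.description)
        return failures


def rejects_over_100(f: Callable) -> bool:
    """Check that the implementation raises ValueError for percent > 100."""
    try:
        f(100.0, 150.0)
    except ValueError:
        return True
    return False


discount_spec = Spec(
    intent="Apply a percentage discount to a price",
    interface="apply_discount(price: float, percent: float) -> float",
    criteria=[
        Criterion("10% off 200 is 180", lambda f: f(200.0, 10.0) == 180.0),
        Criterion("0% leaves price unchanged", lambda f: f(50.0, 0.0) == 50.0),
        Criterion("percent above 100 is rejected", rejects_over_100),
    ],
)


def candidate_v1(price: float, percent: float) -> float:
    # First generated attempt: correct arithmetic, missing the range check.
    return price * (100 - percent) / 100


def candidate_v2(price: float, percent: float) -> float:
    # Second attempt after the failed criterion was fed back.
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return price * (100 - percent) / 100


if __name__ == "__main__":
    print(discount_spec.verify(candidate_v1))  # one failed criterion
    print(discount_spec.verify(candidate_v2))  # empty list: ready for review
```

The design choice worth noting is that the failure list is expressed in the spec's own language, so the same output can be read by the reviewer and fed back to the agent for the next scoped step.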

The Hard Data: AI Fails on Enterprise Codebases

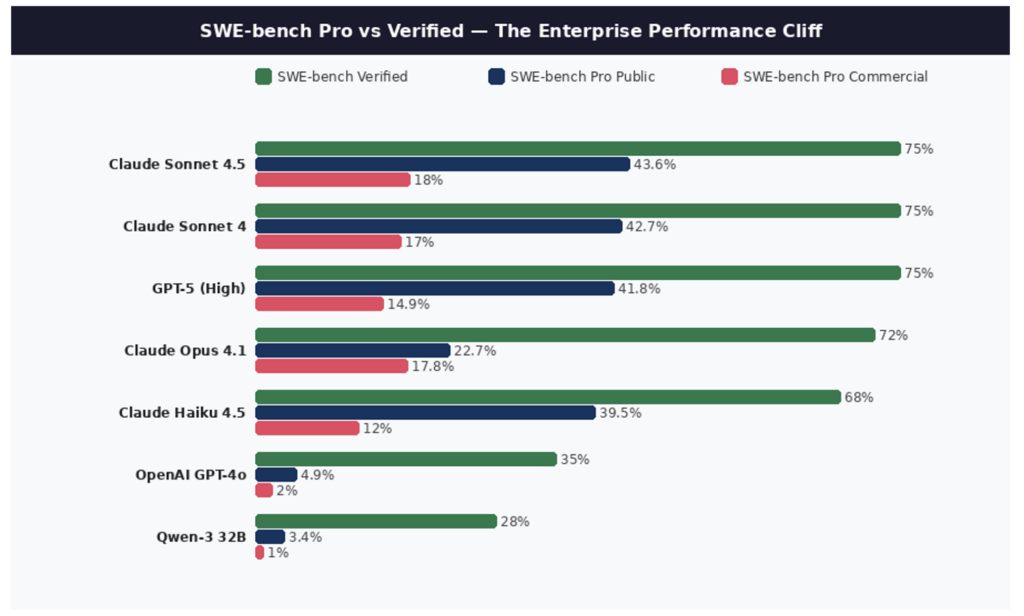

The number every engineering leader needs to internalize: 23%. That is the top resolve rate on Scale AI’s SWE-bench Pro, the most rigorous, contamination-resistant AI coding benchmark ever built.

SWE-bench Pro uses 1,865 tasks from 41 professional repos, including 18 private proprietary codebases from real startups. Tasks require an average of 107 lines of code across 4+ files. These are genuine enterprise problems. The world’s best models solve only about one in four.

For comparison: those same models score 70%+ on SWE-bench Verified — the older benchmark used in most AI marketing materials. That gap is not a rounding error. It is structural.

Why the Performance Cliff Exists

- Context fragmentation: rules and invariants live in scattered files, unwritten conventions, and tribal knowledge agents cannot access.

- Slow or unreliable feedback: flaky tests, slow builds, non-reproducible environments cause agents to loop or hallucinate fixes.

- Ambiguous specs: issues are underspecified without follow-up, and ‘correctness’ depends on product intent that lives only in human memory.

- Verification gaps: if tests, linters, and observability are weak, AI generates more code than the system can safely validate.

- Long-horizon breakdown: multi-file, cross-module changes — the defining task of enterprise work — exceed current agent context reliability.
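
The unreliable-feedback problem in the list above can be surfaced with very little code. The sketch below reruns a test several times and labels it by whether its outcomes agree. The function name, the labels, and the example tests are all hypothetical, and a test that passes every rerun is not proven stable, only not yet observed to flake.

```python
from collections import Counter
from typing import Callable


def classify_test(test: Callable[[], None], runs: int = 20) -> str:
    """Run a test repeatedly; label it 'stable-pass', 'stable-fail', or 'flaky'."""
    outcomes = Counter()
    for _ in range(runs):
        try:
            test()
            outcomes["pass"] += 1
        except AssertionError:
            outcomes["fail"] += 1
    if outcomes["fail"] == 0:
        return "stable-pass"
    if outcomes["pass"] == 0:
        return "stable-fail"
    return "flaky"


def always_passes() -> None:
    assert 1 + 1 == 2


def always_fails() -> None:
    assert 1 + 1 == 3


_shared_state = {"calls": 0}


def order_dependent_test() -> None:
    # Hypothetical flaky test: the result depends on hidden shared state,
    # so it fails on every third call regardless of the code under test.
    _shared_state["calls"] += 1
    assert _shared_state["calls"] % 3 != 0


if __name__ == "__main__":
    for test in (always_passes, always_fails, order_dependent_test):
        print(test.__name__, "->", classify_test(test))
```

An agent that sees the third test fail has no way to tell a real regression from noise, which is why quarantining or fixing flaky tests tends to come before any agentic workflow.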

The precise claim supported by evidence: Current models are relatively good at generating code. They are still weak at reliably navigating, integrating, and validating changes inside complex repositories with imperfect feedback loops.

A further signal from real-world randomised controlled trial (RCT) research: experienced developers working on their own familiar codebases were on average ~19% slower when allowed to use AI tools, because AI output required substantial review and correction. The slowdown came from prompting, waiting, reviewing, and fixing context gaps. AI accelerates output in well-structured environments; it increases overhead in poorly structured ones.

The 8 Pillars of an Agent-Ready Codebase

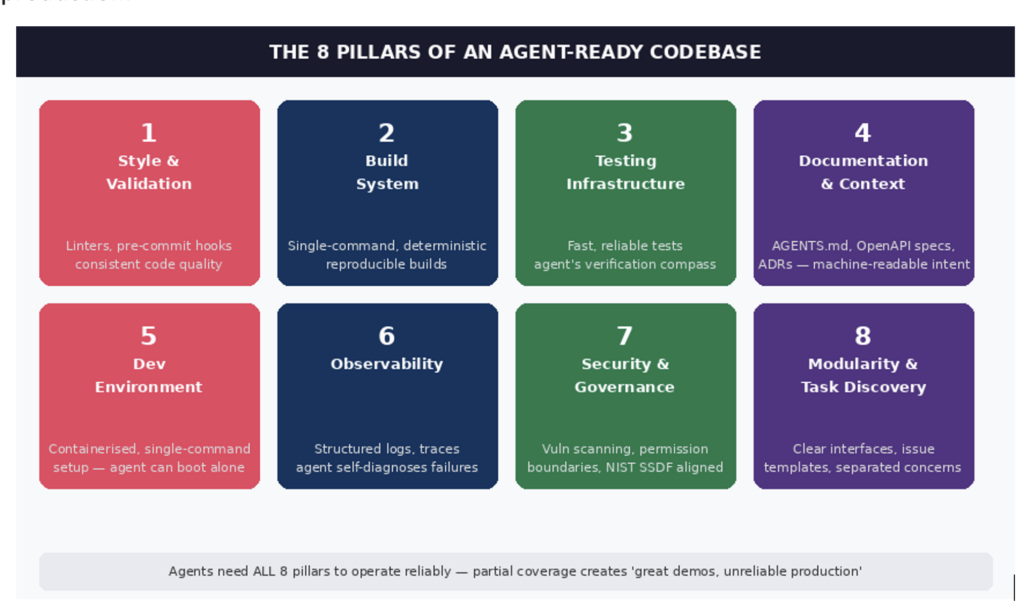

If large codebases are the hard part, then ‘AI doesn’t kill software’ becomes a governance and infrastructure story. Factory.ai’s Agent Readiness framework — validated at EY, Groq, Bilt and others — identifies eight technical pillars that determine whether an AI agent can do real work in your repo, or just generate expensive noise.

The core insight: agents cannot compensate for missing infrastructure the way humans can. Humans improvise around broken builds, absent docs, and opaque conventions. Agents cannot. That gap is why most teams see ‘great demos, unreliable production.’
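
One way to start looking at a repository through this lens is a simple presence check. The sketch below is a hypothetical illustration only. The signal names and marker files are assumptions made for this example, not the eight pillars of the framework, which are not enumerated in the text here. The presence of a file is also a weak proxy for a practice actually being followed.

```python
from pathlib import Path

# Hypothetical signals chosen for illustration. These are NOT the framework's
# eight pillars; they are common conventions an agent can rely on if present.
SIGNALS = {
    "documentation": ["README.md", "README.rst"],
    "tests": ["tests", "test"],
    "ci": [".github/workflows", ".gitlab-ci.yml"],
    "pinned_dependencies": ["poetry.lock", "package-lock.json", "Cargo.lock", "go.sum"],
}


def readiness_report(repo: Path) -> dict[str, bool]:
    """Map each signal to True if any of its marker paths exists in the repo."""
    return {
        signal: any((repo / marker).exists() for marker in markers)
        for signal, markers in SIGNALS.items()
    }


def readiness_score(report: dict[str, bool]) -> float:
    """Fraction of signals present, from 0.0 to 1.0."""
    return sum(report.values()) / len(report)


if __name__ == "__main__":
    report = readiness_report(Path("."))
    for signal, present in report.items():
        print(f"{signal:20s} {'present' if present else 'MISSING'}")
    print(f"score: {readiness_score(report):.2f}")
```

A missing signal is a prompt for a conversation, not a verdict: the point is to find where an agent would have nothing to lean on before asking it to do real work.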

The Transformation Roadmap

A pragmatic sequence to make codebases AI-ready:

- Assess: Score repositories across the 8 pillars. Identify the biggest time sinks: unreliable builds, missing tests, absent docs, weak security gates.

- Stabilise feedback loops first: Reproducible builds, hermetic environments, CI that fails loudly and quickly. No agent can work reliably without deterministic feedback.

- Push verification left: Expand ‘definition of done’ to lint, type-checks, unit and integration tests, and security scanning. NIST SSDF explicitly positions secure development practices as fundamental — not optional.

- Adopt SDD for complex changes: Spec-driven workflows address context loss. Apply selectively; analyses of the approach warn that it can feel like ‘overkill’ for small tasks if not calibrated.

- Measure with outcomes, not vibes: DORA program metrics (deployment frequency, lead time, change failure rate) predict organisational performance and reflect delivery outcomes.

- Govern AI like a production dependency: AI assistance can increase insecure coding in some settings. Policies, guardrails, prompt-injection awareness, and clear review responsibilities are non-negotiable.
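
The outcome metrics named in the roadmap above can be computed from a plain list of deployment records. This is a minimal sketch under stated assumptions: the `Deployment` record and its field names are hypothetical, lead time is measured from first commit to production, and a "failure" is whatever the team agrees to count (rollback, hotfix, incident). Exact definitions vary between organisations.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass(frozen=True)
class Deployment:
    """Hypothetical deployment record; field names are illustrative."""
    committed_at: datetime  # first commit of the change
    deployed_at: datetime   # when the change reached production
    caused_failure: bool    # needed a rollback, hotfix, or incident response


def dora_summary(deployments: list[Deployment], period_days: int) -> dict[str, float]:
    """Summarise deployment frequency, lead time, and change failure rate."""
    if not deployments:
        raise ValueError("need at least one deployment")
    lead_seconds = [
        (d.deployed_at - d.committed_at).total_seconds() for d in deployments
    ]
    failures = sum(d.caused_failure for d in deployments)
    return {
        "deployments_per_day": len(deployments) / period_days,
        "median_lead_time_hours": median(lead_seconds) / 3600,
        "change_failure_rate": failures / len(deployments),
    }


# Made-up sample week, for illustration only.
sample_week = [
    Deployment(datetime(2025, 1, 1, 9), datetime(2025, 1, 1, 13), False),
    Deployment(datetime(2025, 1, 2, 9), datetime(2025, 1, 3, 9), True),
    Deployment(datetime(2025, 1, 4, 10), datetime(2025, 1, 4, 12), False),
    Deployment(datetime(2025, 1, 5, 8), datetime(2025, 1, 5, 16), False),
]

if __name__ == "__main__":
    for name, value in dora_summary(sample_week, period_days=7).items():
        print(f"{name}: {value:.2f}")
```

Tracking these numbers before and after introducing AI tooling gives a comparison grounded in delivery outcomes rather than in how fast code appears to be written.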

The compounding return: Better validation → more productive agents → more work handled autonomously → engineers freed to improve the environment further. One senior engineer who defines rigorous standards can now scale their expertise across an entire organisation. This is the infrastructure investment most companies are skipping while they benchmark models.

The Next Six Months — What the Evidence Says

For at least the near term, senior software engineering talent is irreplaceable. SWE-bench Pro is clear: frontier models fail on roughly 75–80% of real enterprise problems in proprietary codebases. The gap between ‘AI performance on benchmarks’ and ‘AI performance on your specific codebase’ is closed by engineers who understand architecture, write precise specifications, and govern AI output with expert judgment.

Who is at risk

Engineers whose primary value was mechanical code production: translating well-understood requirements into straightforward implementations. AI handles that reliably for well-scoped, well-specified problems. Research also shows junior hiring is declining faster than senior employment; the entry point is compressing.

Who thrives

Engineers who operate at a higher layer of abstraction: defining what should be built, how it should be verified, how the system stays navigable by both humans and agents over time. Context engineering, spec authorship, agent orchestration, and evaluation infrastructure are skills that did not exist three years ago.

What companies must do

The companies that win will not be those that eliminate engineering fastest. They will be those that invest most deliberately in making engineering environments AI-ready — treating codebase infrastructure as the strategic asset it now is. Rushing to AI without the 8 pillars in place is not cost-cutting. It is technical debt accumulation at machine speed.

“The future belongs to engineers who curate the environment where software is built, who set constraints, build automation, and introduce systematic rigour into their development processes.” (Chamith Madusanka, ‘Making Codebases Agent-Ready’, Dec 2025)

The headline is simple: software is not dying, but smaller teams will ship more software than larger teams did three years ago. That compression is permanent. The question for every engineering leader is not whether to engage with it — it is whether to shape it deliberately or be shaped by it reactively.

Written by Prasanth Sai

Gen AI Product Leader · Leads AI Applications and Search at eGain

I partner with PMs and engineers to drive production adoption of AI across Fortune 500 enterprises in the US and Europe. IIT Bombay alumnus; previously co-founded Selekt.in and built ChatGen.ai. The thesis I evangelize: knowledge is the harness for AI applications.