Enterprise AI Operating System in 2026: Comparing the Best

The artificial intelligence landscape is entering a new phase. We are moving beyond the era of conversational copilots and into the age of the Enterprise AI Operating System. For years, the modern enterprise has relied on a patchwork of software: ERP for finance and supply chain, CRM for customer management, HRIS for workforce operations, and countless other systems layered in between. Even during the automation wave around 2019, platforms such as UiPath promised to streamline these fragmented workflows. But those automations were still essentially human-built scripts: rigid, rule-based, and often expensive to maintain as systems, processes, and business rules changed.

That model is now being challenged by a new vision: the Enterprise AI Operating System. In this emerging paradigm, AI agents do more than answer questions or generate drafts; they operate the business itself. They access applications, complete multi-step workflows across CRM, ERP, HRIS, and other systems, make contextual decisions using enterprise data, and pursue defined goals with increasing autonomy, with humans stepping in only where oversight, judgment, or accountability is required. The harder problem these platforms are racing to solve is providing the glue: connecting fragmented systems, surfacing the right context to each agent, and enforcing the governance, permissions, and auditability that enterprise operations demand.

In this article, we explore this rapidly evolving market and examine how leading platforms, including OpenAI Frontier, Microsoft Copilot, Salesforce Agentforce, Moveworks (a ServiceNow company), Glean, and Anthropic’s Claude with its Cowork and Agent Teams offerings, are competing to become that essential glue layer. We look at their strategies, strengths, and limitations, and what organizations must consider as they move from isolated AI tools to a truly cohesive, agentic operating model.

OpenAI Frontier

What It Is

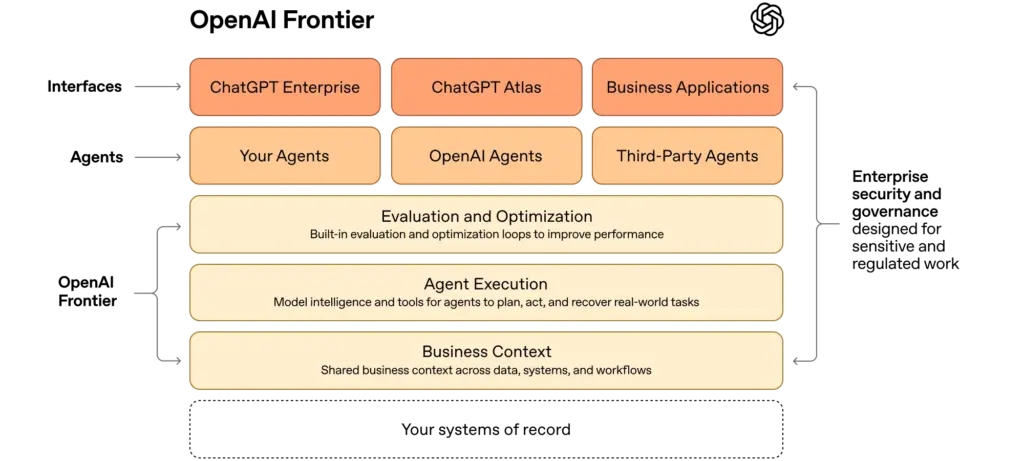

OpenAI Frontier, launched in February 2026, is an enterprise platform for building, deploying, and managing AI agents across business systems. It is important to understand what Frontier is not: it is not an AI agent itself, nor is it simply a chat interface like ChatGPT Enterprise. Frontier is the orchestration and governance layer that sits above agents, the infrastructure that gives those agents the shared context, permissions, and operational structure they need to do real work inside an organization.

The company describes Frontier as giving AI agents “the same skills people need to succeed at work: shared context, onboarding, hands-on learning with feedback, and clear permissions and boundaries”.

Philosophy

The foundational philosophy of OpenAI Frontier is that AI agents should be managed the way organizations manage people, not the way IT teams manage software. Most enterprise AI deployments today treat agents as sophisticated API calls — isolated, stateless, and disconnected from the broader organizational context. Frontier rejects this model entirely. Instead, it treats each AI agent as a digital coworker: an entity that needs to be onboarded, given institutional knowledge, held to performance standards, and trusted within defined boundaries.

This philosophy is operationalized through four core pillars:

Business Context — The Institutional Knowledge Layer

Frontier gives agents a shared understanding of the business by connecting siloed enterprise systems into one semantic context layer. This ensures every agent works from the same institutional memory and improves that knowledge over time through durable memory.

Agent Execution — The Operational Environment

Frontier enables agents to execute real work across complex, multi-step tasks by using tools, reasoning over data, and collaborating with humans or other agents. It also provides reliable runtime support—such as queuing, retries, and state persistence—across local, cloud, or OpenAI-hosted environments.
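To make the runtime ideas above (retries and state persistence) concrete, here is a minimal Python sketch. It is not Frontier's API: `run_task`, `StepFailed`, the checkpoint file layout, and the step functions are all hypothetical names invented for illustration. The sketch runs an ordered list of steps, retries a step that fails transiently, and writes a checkpoint after each completed step so that a restarted run skips finished work.

```python
import json
import tempfile
from pathlib import Path


class StepFailed(Exception):
    """Raised by a step to signal a transient, retryable failure."""


def run_task(steps, checkpoint_path, max_retries=3):
    """Run (name, callable) steps in order with retries and checkpoints.

    Each callable receives the accumulated data dict and returns a dict
    of updates. Completed step names are saved to a JSON file so that a
    later run resumes instead of repeating work. (Hypothetical design.)
    """
    path = Path(checkpoint_path)
    if path.exists():
        state = json.loads(path.read_text())
    else:
        state = {"done": [], "data": {}}
    for name, step in steps:
        if name in state["done"]:
            continue  # finished in an earlier run
        for attempt in range(1, max_retries + 1):
            try:
                updates = step(state["data"])
                break
            except StepFailed:
                if attempt == max_retries:
                    raise  # give up after the last allowed attempt
        state["data"].update(updates)
        state["done"].append(name)
        path.write_text(json.dumps(state))  # persist progress
    return state


# Illustrative steps: the first one fails once before succeeding.
attempts = {"fetch": 0}


def fetch_invoice(data):
    attempts["fetch"] += 1
    if attempts["fetch"] < 2:
        raise StepFailed("temporary outage")
    return {"invoice_id": "INV-1"}


def post_to_ledger(data):
    return {"posted": data["invoice_id"]}


steps = [("fetch", fetch_invoice), ("post", post_to_ledger)]
with tempfile.TemporaryDirectory() as tmp:
    checkpoint = Path(tmp) / "task.json"
    result = run_task(steps, checkpoint)
    rerun = run_task(steps, checkpoint)  # resumes from the checkpoint
```

A production runtime would typically add backoff between attempts and a durable queue in place of the in-memory list; both are left out here to keep the sketch short.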

Evaluation and Optimization — The Feedback and Learning Loop

Frontier continuously measures agent performance so organizations can identify what is working, correct failures, and improve outcomes over time. This turns agents from static automations into systems that become more dependable through feedback and iteration.
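As a rough illustration of such a feedback loop, the Python sketch below aggregates run outcomes into a per-agent success rate and flags agents that fall below a threshold. The function names, the agent names, and the 0.8 threshold are assumptions made for this example; the article does not describe how Frontier actually scores agents.

```python
from collections import defaultdict


def success_rates(runs):
    """Compute the success rate per agent.

    `runs` is an iterable of (agent_name, succeeded) pairs.
    """
    totals = defaultdict(lambda: [0, 0])  # agent -> [passed, total]
    for agent, succeeded in runs:
        totals[agent][0] += int(succeeded)
        totals[agent][1] += 1
    return {agent: passed / total for agent, (passed, total) in totals.items()}


def needs_review(rates, threshold):
    """Return the agents whose success rate is below the threshold."""
    return sorted(agent for agent, rate in rates.items() if rate < threshold)


# Hypothetical run log: (agent, did the run succeed?)
runs = [
    ("invoice-agent", True),
    ("invoice-agent", True),
    ("invoice-agent", False),
    ("hr-agent", True),
    ("hr-agent", True),
]
rates = success_rates(runs)
flagged = needs_review(rates, threshold=0.8)
```

Here `invoice-agent` succeeds in two of three runs and is flagged for review, while `hr-agent` passes. A real evaluation layer would likely grade output quality as well as binary success.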

Identity and Governance — The Trust and Compliance Layer

Each agent operates with a unique identity, role-based permissions, and full auditability, ensuring secure and controlled access to enterprise systems. This governance model makes large-scale deployment viable, especially in regulated environments, while meeting major enterprise security standards.
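The combination of unique identity, role-based permissions, and auditability can be sketched in a few lines of Python. This is an illustration only: `AgentIdentity`, `Gateway`, the role names, and the permission strings are hypothetical and do not come from any vendor's API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical mapping of roles to the permissions they grant.
ROLE_PERMISSIONS = {
    "support-agent": {"crm:read", "tickets:write"},
    "finance-agent": {"erp:read", "erp:write"},
}


@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str  # unique per agent
    role: str


@dataclass
class Gateway:
    """Checks every request against the agent's role and records it."""

    audit_log: list = field(default_factory=list)

    def authorize(self, agent, permission):
        allowed = permission in ROLE_PERMISSIONS.get(agent.role, set())
        # Record both granted and denied requests for later audit.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent.agent_id,
            "permission": permission,
            "allowed": allowed,
        })
        return allowed


gateway = Gateway()
bot = AgentIdentity(agent_id="agent-001", role="support-agent")
can_read_crm = gateway.authorize(bot, "crm:read")
can_write_erp = gateway.authorize(bot, "erp:write")
```

The key design choice is that denied requests are logged as well as granted ones, since an audit trail that only records successes cannot show what an agent attempted.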

Together, these four pillars form a coherent operating model: agents that understand the business, can act within it, improve through experience, and operate within trusted boundaries.

| Strengths | Weaknesses |
| --- | --- |
| Strong OpenAI brand and enterprise credibility, with over 1 million businesses already using OpenAI products | Broad horizontal platform, but not deeply domain-specific out of the box; vertical tools like Agentforce or Kore.ai offer richer pre-built workflows for specific functions |
| Horizontal “OS” positioning means it can orchestrate agents across any department or business function | Requires significant implementation and customization effort; success depends heavily on consulting partners and services for scale |
| Open standards and multi-vendor orchestration (MCP support) allow management of agents built on Anthropic, Google, or custom models, reducing vendor lock-in concerns | Still newer to enterprise than incumbents like Microsoft, Salesforce, and ServiceNow, which have years of established enterprise relationships and integration depth |
| Tight access to OpenAI’s latest frontier models (GPT-5.x) with low-latency priority for production workloads | Availability and pricing transparency limited at launch: Frontier rolled out to a select group of customers, with broader access still pending |
| Governance, permissions, and enterprise controls are built into the platform architecture, not bolted on after the fact | Early documentation gaps in agent-specific security functionality: the main OpenAI security page did not even list Frontier as a product at launch |

Microsoft Copilot & Agent 365

What It Is

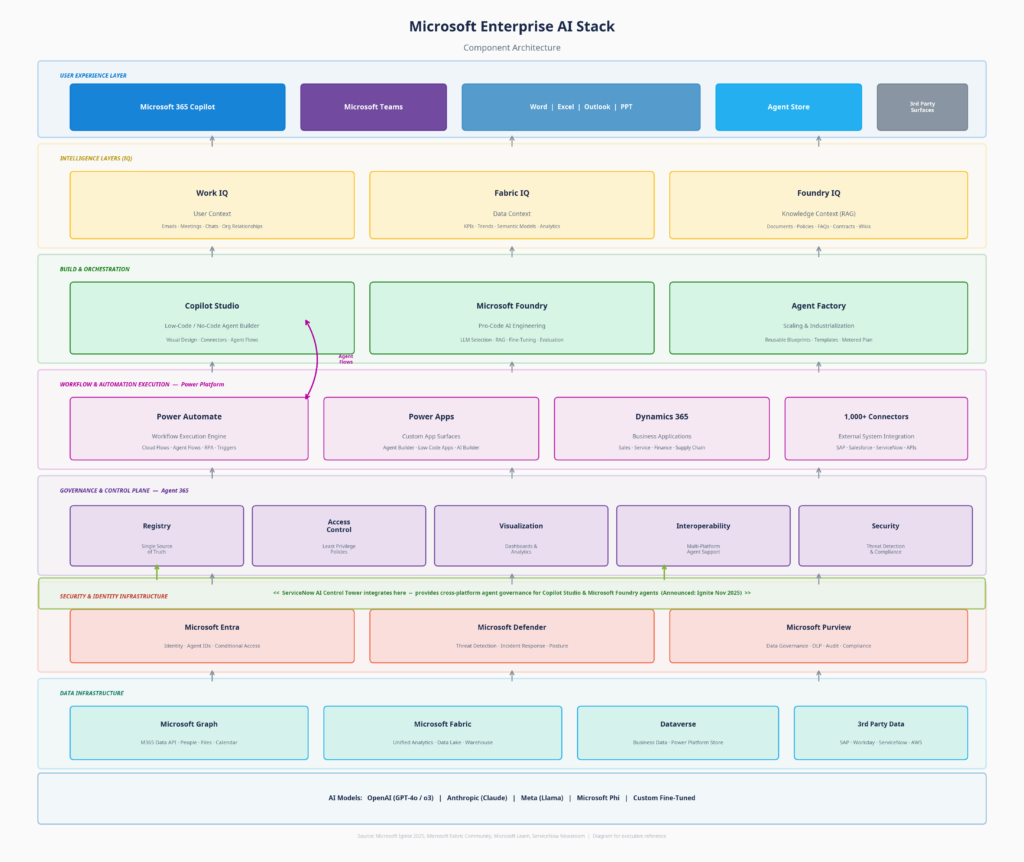

Microsoft’s Enterprise OS play is not a single product — it is a layered ecosystem that has evolved over time. At the surface sits Microsoft 365 Copilot, the AI assistant embedded in Word, Excel, Outlook, Teams, and other Microsoft 365 applications. Beneath that sits Copilot Studio, a low-code platform for building custom AI agents, alongside Microsoft Foundry for pro-code development. And at the foundation sits Agent 365, which serves as the control plane for deploying, organizing, and governing AI agents at enterprise scale.

Microsoft itself draws a clear distinction between these layers: “Copilot is your personal, private assistant that works solely for you, enhancing your capabilities. And agents are expert systems that operate autonomously on behalf of a process or company.” Or, put even more simply: “Microsoft 365 is designed for your users. Agent 365 is designed for your agents.”

Philosophy

Microsoft’s philosophy is rooted in a principle that none of its competitors can easily replicate: meet enterprises where they already are. Rather than asking enterprises to adopt an entirely new platform — as Frontier does — Microsoft’s approach is to extend the infrastructure that enterprises already use to manage people and apps, and apply it to agents. Agent 365 does not replace existing IT governance processes; it evolves them.

This philosophy is operationalized through a layered architecture:

Microsoft 365 Copilot — The Personal Assistant Layer

This is the personal AI assistant layer that helps individual employees draft documents, summarize meetings, analyze spreadsheets, and manage email. The Work IQ intelligence layer behind Copilot provides persistent memory across Microsoft 365 apps, understanding user roles, company structure, communication patterns, and project histories.

Copilot Studio & Power Platform — The Agent Builder and Execution Engine

Copilot Studio provides a low-code environment where business users and developers can create custom agents with defined knowledge sources, permitted actions, and conversation logic. Crucially, these agents execute their actions through Power Automate (via “Agent Flows”), connecting to external systems through over 1,000 connectors and APIs to actually perform work, not just recommend it.

Agent 365 & ServiceNow Integration — The Control Plane

Agent 365 is the native governance layer for the entire agent ecosystem. It provides five core capabilities: a registry that acts as a single source of truth for all agents in the organization (including “shadow agents”); access controls that enforce least-privilege permissions; visualization dashboards for observability; interoperability standards; and security integration with Defender and Purview.

Notably, recognizing that enterprises operate across multiple clouds, Microsoft announced at Ignite 2025 an integration with ServiceNow AI Control Tower. This integration connects directly into Agent 365’s registry and interoperability layers, allowing organizations to monitor, secure, and govern Microsoft agents (built in Copilot Studio or Foundry) from ServiceNow’s unified, cross-platform pane of glass.
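The registry and least-privilege ideas described for Agent 365 can be illustrated with a small Python sketch. It is a conceptual model, not Agent 365's interface: `AgentRegistry`, its methods, and the agent and scope names are all hypothetical. The registry acts as the single source of truth, so any agent observed in the environment but missing from it counts as a shadow agent, and any scope that is granted but never used is a candidate for removal.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRecord:
    agent_id: str
    owner: str
    granted_scopes: frozenset


class AgentRegistry:
    """Single source of truth for the agents an organization runs."""

    def __init__(self):
        self._records = {}

    def register(self, agent_id, owner, scopes):
        self._records[agent_id] = AgentRecord(agent_id, owner, frozenset(scopes))

    def shadow_agents(self, observed_ids):
        """Agents seen running that were never registered."""
        return sorted(set(observed_ids) - set(self._records))

    def unused_scopes(self, agent_id, used_scopes):
        """Granted scopes the agent never used (least-privilege review)."""
        granted = self._records[agent_id].granted_scopes
        return sorted(granted - set(used_scopes))


registry = AgentRegistry()
registry.register("hr-onboarding", "hr-team", {"hris:read", "hris:write"})
registry.register("sales-followup", "sales-ops", {"crm:read"})

# Hypothetical telemetry: agent IDs observed making calls this week.
shadow = registry.shadow_agents(["hr-onboarding", "sales-followup", "expense-bot"])
unused = registry.unused_scopes("hr-onboarding", used_scopes={"hris:read"})
```

In this example `expense-bot` surfaces as a shadow agent, and `hris:write` is flagged as a scope that could be revoked from `hr-onboarding`.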

The IQ Layers — The Intelligence Fabric

Rather than a single context engine, Microsoft splits intelligence into three distinct layers: Work IQ (user context from emails and meetings), Fabric IQ (data context from analytics and KPIs), and Foundry IQ (knowledge context via RAG). Together, they give the entire ecosystem a shared understanding of organizational reality.

| Strengths | Weaknesses |
| --- | --- |
| Massive enterprise reach: With 400M+ paid users and roughly 90% of the Fortune 500 already using Copilot, Microsoft can expand adoption inside an existing customer base instead of winning a new platform battle. | Heavily Microsoft-centric: Although Agent 365 can govern third-party agents, it delivers the most value inside the Microsoft stack (Entra, Graph, Purview). Organizations built on Google or other ecosystems gain less benefit. |
| Built-in governance: Security, identity, and compliance are native through Entra, Defender, and Purview, making it especially attractive for regulated enterprises. | Built incrementally, not from scratch: The platform evolved from Copilot and Copilot Studio, so it can feel more like an extension of existing products than a unified enterprise AI operating system. |
| Consumer-to-enterprise path: Microsoft uniquely connects personal productivity assistants and enterprise agent orchestration in one ecosystem, enabling gradual adoption. | Product and licensing complexity: Copilot, Copilot Chat, Copilot Studio, Agent 365, Work IQ, and Foundry create overlap and confusion, especially for IT buyers. |
| Cross-platform governance via ServiceNow: The integration with ServiceNow AI Control Tower bridges the gap for enterprises that need to govern Microsoft agents alongside other vendors. | Limited native multi-cloud visibility: It governs well inside Microsoft natively, but relies on partners like ServiceNow for end-to-end visibility across agents spanning AWS, GCP, Snowflake, and Salesforce. |

The Workflow Natives: Salesforce & ServiceNow

These platforms represent a fundamentally different path to the Enterprise OS than OpenAI Frontier or Microsoft Copilot. Where Frontier was purpose-built as a horizontal orchestration layer from day one, and Microsoft extends an existing productivity suite into agent management, the “Workflow Natives” arrived at the Enterprise OS from the opposite direction: they started deep inside specific business functions and are expanding outward to orchestrate the broader enterprise.

Salesforce Agentforce

What It Is

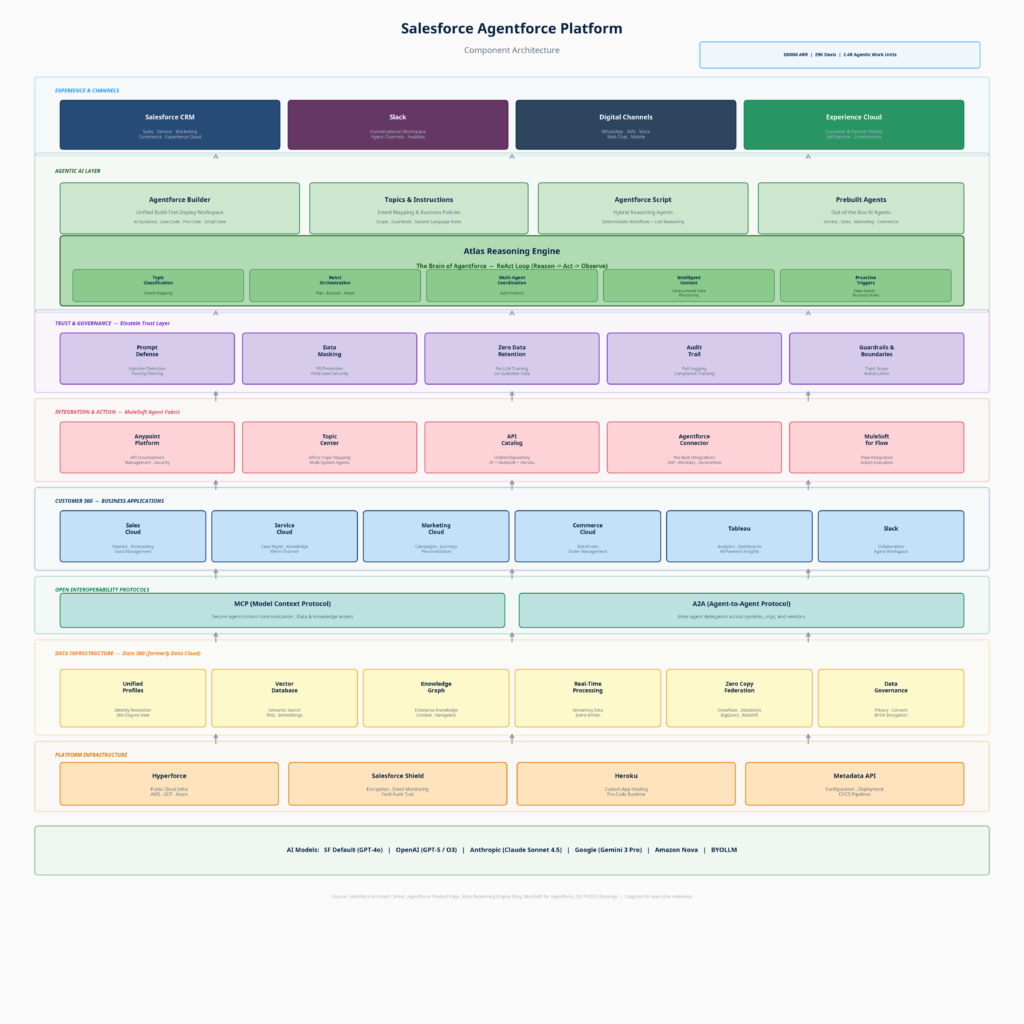

Agentforce is Salesforce’s end-to-end platform for designing, deploying, and governing AI agents across sales, service, marketing, commerce, and increasingly back-office operations. Built on the broader Salesforce ecosystem, it connects agents to customer and enterprise data through Data 360, executes workflows through MuleSoft Agent Fabric, reasons through the Atlas Reasoning Engine, and surfaces analytics through Tableau — all within a unified, deeply integrated stack. Notably, Salesforce has adopted open interoperability protocols — the Model Context Protocol (MCP) for secure agent-to-tool communication and the Agent-to-Agent (A2A) Protocol for inter-agent delegation across systems, organizations, and vendors — positioning Agentforce as one of the few enterprise platforms designed for multi-vendor agent ecosystems from inception.

Philosophy

Salesforce frames its philosophy around “digital labor”. Rather than positioning AI agents as assistants that help employees work faster, Salesforce treats them as autonomous digital workers who perform business functions on behalf of the organization — handling lead qualification, resolving customer issues, managing billing disputes — around the clock with minimal human intervention.

The Agentforce Platform operationalizes this through four layers:

Data 360 (The Context Layer): Unifies customer and enterprise data into a single semantic layer with identity resolution, a vector database for semantic search, knowledge graphs, and zero-copy federation with Snowflake, Databricks, BigQuery, and Redshift. The ~$8 billion Informatica acquisition further strengthened this layer with enterprise-grade data governance, quality, and metadata management.

Atlas Reasoning Engine (The Logic Layer): The brain of Agentforce, built on a ReAct (Reason → Act → Observe) loop — a continuous cycle where the agent classifies intent via Topics, plans actions, executes them, observes results, and adapts until the goal is fulfilled. Unlike simpler Chain-of-Thought approaches, Atlas supports proactive triggers — agents can be activated not just by user input but by data events and business rules (a case status change, an email received, a meeting starting) — making them genuinely autonomous rather than purely reactive. The engine also supports hybrid reasoning through Agentforce Script, pairing deterministic workflows with flexible LLM reasoning for predictable yet adaptive execution.

MuleSoft Agent Fabric (The Execution Layer): The automation and integration layer, connecting agents to any system through the Anypoint Platform, Topic Center (mapping APIs to agent actions), a unified API Catalog, and pre-built connectors for SAP, Workday, ServiceNow, and hundreds of other systems.

Customer 360 Apps (The Domain Layer): The specific applications — Sales Cloud, Service Cloud, Marketing Cloud, Commerce Cloud, Tableau, and Slack — that provide the domain context to make agents immediately useful within the workflows enterprises already run.
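To make the Reason, Act, Observe cycle attributed to the Atlas Reasoning Engine more concrete, here is a minimal Python sketch of a generic ReAct-style loop. It is not Salesforce code: `react_loop`, the scripted `reason` function (which stands in for an LLM), and the two tools are hypothetical and exist only to show the control flow.

```python
def react_loop(goal, reason, tools, max_steps=5):
    """Generic Reason -> Act -> Observe loop.

    `reason(goal, history)` returns (tool_name, argument), or
    ("finish", answer) once the goal is met. `tools` maps tool names
    to callables. Returns the final answer and the step history.
    """
    history = []
    for _ in range(max_steps):
        action, argument = reason(goal, history)         # Reason
        if action == "finish":
            return argument, history
        observation = tools[action](argument)            # Act
        history.append((action, argument, observation))  # Observe
    raise RuntimeError("goal not reached within max_steps")


def scripted_reason(goal, history):
    """Stand-in for an LLM: a fixed plan that depends on what was observed."""
    if not history:
        return "lookup_case", goal
    if len(history) == 1:
        return "send_update", history[0][2]
    return "finish", history[-1][2]


# Hypothetical tools an agent might be allowed to call.
tools = {
    "lookup_case": lambda case_id: f"{case_id} is awaiting refund approval",
    "send_update": lambda text: f"customer notified: {text}",
}

answer, trace = react_loop("case-42", scripted_reason, tools)
```

Each pass feeds the previous observations back into the reasoning step, which is what lets the agent adapt its next action. The `max_steps` cap is a simple guard against loops that never reach the goal.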

| Strengths | Weaknesses |
| --- | --- |
| Immediate time-to-value: Ships with over 200 pre-built templates and industry-specific agents, allowing production deployment in weeks rather than months. | Domain lock-in: Most powerful inside the Salesforce ecosystem; orchestrating workflows entirely outside Salesforce introduces latency and integration complexity, though MCP and A2A adoption is beginning to address this. |
| Data gravity: Holds the customer CRM data that matters. Agents natively draw on the full history of customer interactions already sitting in Salesforce. | Cost and complexity: Base pricing is high ($125–$150/user/month) plus consumption charges, requiring significant initial investment and professional services. |
| Model breadth: One of the most model-diverse enterprise platforms (officially supports GPT-5, Claude Sonnet 4.5, Gemini 3 Pro, Amazon Nova, and BYOLLM via Einstein Studio), giving enterprises flexibility to avoid single-model lock-in. | Integration risk: Expanding rapidly through massive acquisitions (Informatica, Apromore, Regrello), raising questions about long-term platform coherence. |
| Proven scale: Reached $800M in ARR by early 2026, closed 29,000 deals, processed over 2.4 billion agentic work units, with 60% of Q4 bookings coming from existing customer expansions, signaling deepening adoption, not just initial experimentation. | Emerging open protocols: MCP and A2A adoption is still early across the industry; the full promise of cross-vendor agent interoperability remains unproven at enterprise scale. |

ServiceNow (+ Moveworks)

What It Is

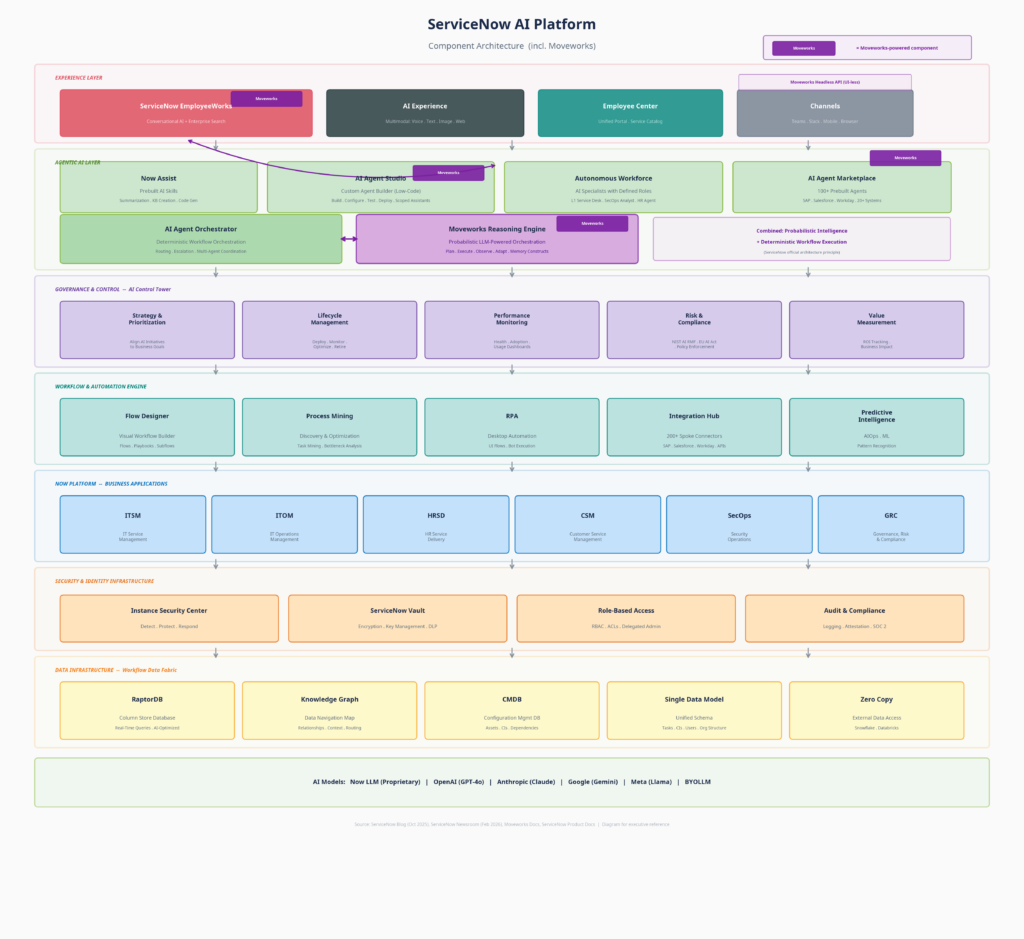

ServiceNow, which completed its $2.85 billion acquisition of Moveworks in December 2025, positions itself as the “AI control tower for business reinvention.” The ServiceNow AI Platform integrates with any cloud, any model, and any data source to orchestrate how work flows across the enterprise. Following a major update in February 2026, the combined platform now features the Autonomous Workforce (specialized AI agents with defined roles), EmployeeWorks (the Moveworks-powered conversational front door), and AI Control Tower for centralized governance. Its origin story is IT service management and back-office workflow automation — the middle and back office.

Philosophy

ServiceNow’s philosophy is grounded in the belief that AI agents are only as valuable as the workflows they operate within. In its view, most enterprise AI pilots fail because there is no structured operational framework for the AI to reason against. ServiceNow argues that its 75 billion annual automated workflows across IT, HR, risk, and security operations provide the perfect foundation for enterprise AI to act upon.

The platform achieves “end-to-end agentic fulfillment” through a unique dual-engine architecture:

The Experience Layer (EmployeeWorks):

The conversational front door powered by Moveworks, providing an intuitive AI assistant that lets employees ask, search, and take action in natural language across multiple channels (Teams, Slack, portal).

The Dual Orchestration Layer (Probabilistic + Deterministic):

This is where the Moveworks acquisition fundamentally changed ServiceNow’s architecture. Rather than relying on a single engine, the platform combines two:

- Moveworks Reasoning Engine: A probabilistic LLM-powered engine that plans multi-step tasks, observes results, and adapts on the fly.

- AI Agent Orchestrator: ServiceNow’s native deterministic workflow engine (Flow Designer) that handles strict routing, escalation, and rules-based execution.
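One plausible way to combine a deterministic engine with a probabilistic one is sketched below in Python. This is an assumption about the general pattern, not a description of how ServiceNow wires the two engines together: `orchestrate`, `plan_with_llm`, the flow table, and the step names are all hypothetical. Requests that match a known flow follow fixed, auditable steps, and anything else falls through to a planner.

```python
# Hypothetical rule-based flows: fixed, auditable step sequences.
DETERMINISTIC_FLOWS = {
    "password_reset": ["verify_identity", "reset_password", "notify_user"],
    "laptop_request": ["check_entitlement", "create_order", "route_for_approval"],
}


def plan_with_llm(request):
    """Stand-in for a probabilistic planner; a real one would call an LLM."""
    return ["search_knowledge_base", "draft_answer", "ask_human_to_confirm"]


def orchestrate(intent, request, planner=plan_with_llm):
    """Route to a fixed flow when one exists, otherwise plan dynamically."""
    if intent in DETERMINISTIC_FLOWS:
        return {"engine": "deterministic", "steps": DETERMINISTIC_FLOWS[intent]}
    return {"engine": "probabilistic", "steps": planner(request)}


known = orchestrate("password_reset", "I forgot my password")
novel = orchestrate("unknown", "Why was my VPN access revoked?")
```

Checking the deterministic table first keeps routine, compliance-sensitive requests on a predictable path and reserves the flexible planner for cases no rule anticipates.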

The Governance Layer (AI Control Tower):

A single pane of glass for CIOs to monitor, govern, and secure all AI agents — tracking ROI, enforcing NIST/EU AI Act compliance, and managing agent lifecycles. Notably, this governance layer is so robust that Microsoft announced at Ignite 2025 it would use ServiceNow AI Control Tower to govern its own Copilot Studio and Foundry agents.

| Strengths | Weaknesses |
| --- | --- |
| Workflow foundation: Agents come pre-connected to decades of structured workflow automation, providing the operational plumbing that AI needs to actually execute tasks. | Top-down complexity: Governance capabilities are designed for huge organizations already deeply invested in ServiceNow, leaving a gap for department-level teams. |
| Probabilistic + Deterministic engine: The Moveworks acquisition perfectly married flexible LLM reasoning with strict, auditable back-end workflow execution. | Integration maturity: While the vision is clear, the Moveworks acquisition only closed in late 2025; the full technical integration of the combined platform is still in its early phases. |
| Cross-platform governance: AI Control Tower’s ability to govern third-party agents (validated by Microsoft’s adoption) positions ServiceNow as a true multi-cloud OS. | Competitive overlap: Aggressively expanding into CRM and customer service, creating strategic friction for enterprises that also run Salesforce. |

Conclusion

Not every platform fits neatly into the Enterprise OS category. Glean has carved out a distinct position by building what may be the most underestimated layer of the Enterprise OS, context itself: a proprietary knowledge graph that maps relationships between people, documents, conversations, and systems across the enterprise. This positions it as a context substrate that sits beneath any agent framework rather than competing with one directly. Anthropic’s Claude is quietly expanding into its own enterprise surface through Agent Teams and Cowork, suggesting Anthropic is exploring what it looks like to be the Enterprise OS itself.

Every player covered in this article is converging on the same destination: emulating how humans work inside organizations. The logical terminus is not AI-assisted enterprise software — it is Labor as a Service: digital workers deployed, governed, evaluated, and scaled alongside their human counterparts. The platforms that win will be the ones that make that model not just technically possible, but organizationally trustworthy.


Written by Prasanth Sai

Gen AI Product Leader · Leads AI Applications and Search at eGain

I partner with PMs and engineers to drive production adoption of AI across Fortune 500 enterprises in the US and Europe. IIT Bombay alumnus; previously co-founded Selekt.in and built ChatGen.ai. The thesis I evangelize: knowledge is the harness for AI applications.